Increasingly sophisticated deepfake technologies are forcing lawmakers around the world to try to tighten legal frameworks to safeguard privacy and security.

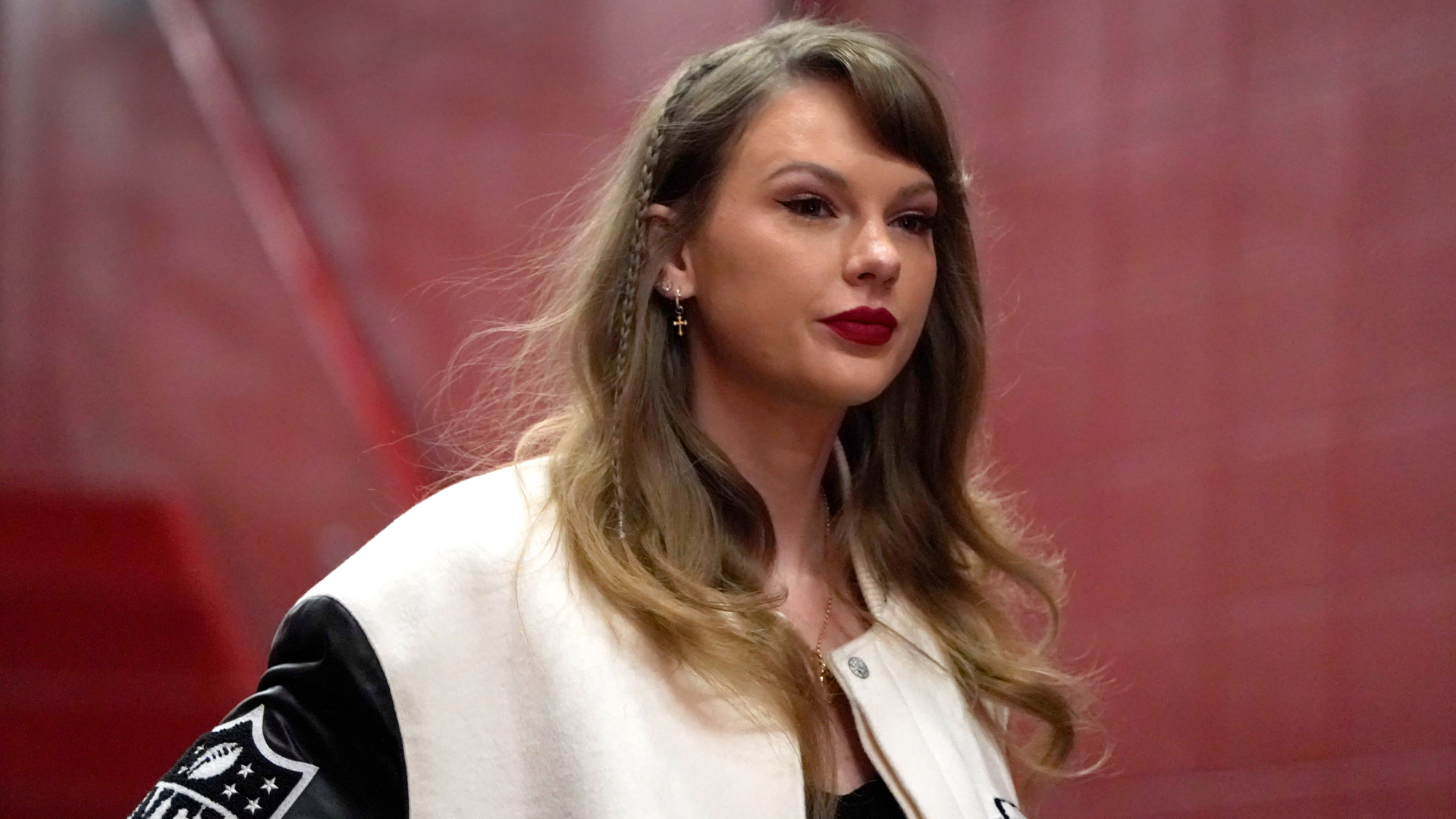

Recent deepfake incidents – ranging from the release of sexually explicit images purporting to be of Taylor Swift to Chinese disinformation in the Taiwan presidential election – show the vast and complex nature of the deepfake problem created by, or propelled by, artificial intelligence (AI).

The problem is getting worse as the technology needed to produce deepfakes becomes more available and easier to use.

Some governments are already moving on the issue. Sadly, Canada seems to be a step, or two, behind. Canada needs new legislation and other measures – yesterday – to protect its citizens and strengthen democratic resilience.

By contrast, the United Kingdom criminalized the sharing of deepfake pornography as part of its Online Safety Act last year, while the European Union’s Digital Services Act also addressed non-consensual deepfake porn.

In January, the No AI FRAUD Act was introduced in the U.S. House of Representatives in the hope of establishing a federal framework for action in the wake of the sexually explicit deepfakes of Swift.

In Canada, eight provinces have enacted legislation on the sharing of non-consensual intimate images – Nova Scotia, Alberta, British Columbia, Saskatchewan, Manitoba, New Brunswick, Newfoundland and Labrador, and Prince Edward Island – but only half of them target edited images.

The Intimate Images Protection Act in British Columbia gives prosecutors the power to pursue actors responsible for disseminating non-consensual fake intimate images online. However, it does not address the person responsible for creating them, nor the big tech platforms that helped spread them.

The ease with which technology can now create deepfakes also poses a grave threat in terms of malign foreign influence, especially defamation campaigns against political candidates or democracy and human rights activists, particularly women and racial and sexual minorities.

The cautionary tale of Taiwan

China’s attempt to interfere in Taiwan’s recent presidential and legislative elections is a textbook example.

Deepfake videos of Lai Ching-te, the successful Democratic Progressive Party (DPP) candidate for president, were disseminated online, falsely depicting him as supporting the coalition between the Kuomintang (KMT) and Taiwan People’s Party (TPP). Synthesized audio of him purportedly criticizing his own party was also spread.

These Chinese deepfakes distorted his original statements in a bid to influence public opinion and undermine the democratic process.

China tried to undercut Lai because he favours preserving the status quo regarding the political status of Taiwan, arguing that it is already independent, as well as strengthening relations with the United States and other liberal democracies.

Although the Chinese disinformation campaigns did not have a significant impact on the result, they showed how deepfakes could have a greater impact on election outcomes elsewhere, especially where the vote is close.

As the technology spreads, this problem is only likely to get worse and there are few ways for the targets of deepfakes to combat their impact.

Few can mobilize like Taylor Swift’s supporters

In the case of Swift, her fans used hashjacking and complaints to X, formerly Twitter, to prevent further abuse after the initial publication.

The problem is that few, if any, human rights or pro-democracy activists and politicians who are being targeted by deepfakes have such a large number of fans or supporters able to mobilize on their own to protect them in the face of defamation.

In addition, it’s increasingly, and surprisingly, easy to create realistic deepfakes.

In late January, a deepfake audio robocall of U.S. President Joe Biden urging Democratic voters to skip the primary election in New Hampshire is estimated to have been placed between 5,000 and 25,000 times. The caller ID falsely showed the call as coming from Kathy Sullivan, a former state Democratic chairwoman who helped run a pro-Biden political group.

Paul Carpenter, who says he was hired to make the deepfake audio, says it “took less than 20 minutes and cost only $1.” He was paid $150 to make it.

The low cost, the ease of production and the general lack of legal consequences serve as a strong incentive for anyone with the skills and a target in mind to do the same thing.

Therefore, the federal government must introduce legislation that increases transparency and criminalizes the use of artificial intelligence in disinformation, especially the sharing of harmful deepfakes.

There are already precedents around the globe for how laws and regulations could take shape. In addition to the U.S. No AI FRAUD Act, another model for a federal framework against AI abuse is the U.S. Federal Communications Commission’s move to make it illegal for robocallers to use AI voice clones.

Moreover, lawmakers should look into possibilities for new legislation on, and voluntary disclosure requirements by, big tech companies and social media platforms.

For combating electoral interference, specific legislation that bans the use of deepfakes in influence operations would also be necessary. For instance, Taiwan’s Election and Recall Act added specific clauses aimed at preventing deepfake audio and video.

While Canadian legislation is needed sooner rather than later, it won’t be the entire solution. Instead, a whole-of-society approach is necessary.

Recently, I also proposed 10 concrete policy recommendations on what Canada could learn from Taiwan’s decentralized network to strengthen its democratic resilience.

Canada is already far behind many other like-minded democracies. It is time for more policy and less rhetoric.